What Requires CIO and CISO Focus

In Part I, we covered four failure modes that derail AI projects before they deliver value.

Once an organization is ready to move forward, the next question is how to govern the deployment it is building. The governance investment required is not uniform. A coding assistant used internally by developers carries a fundamentally different risk profile from an AI agent autonomously processing customer transactions. Treating them the same leads either to over-engineering low-risk deployments or to under-protecting high-risk ones.

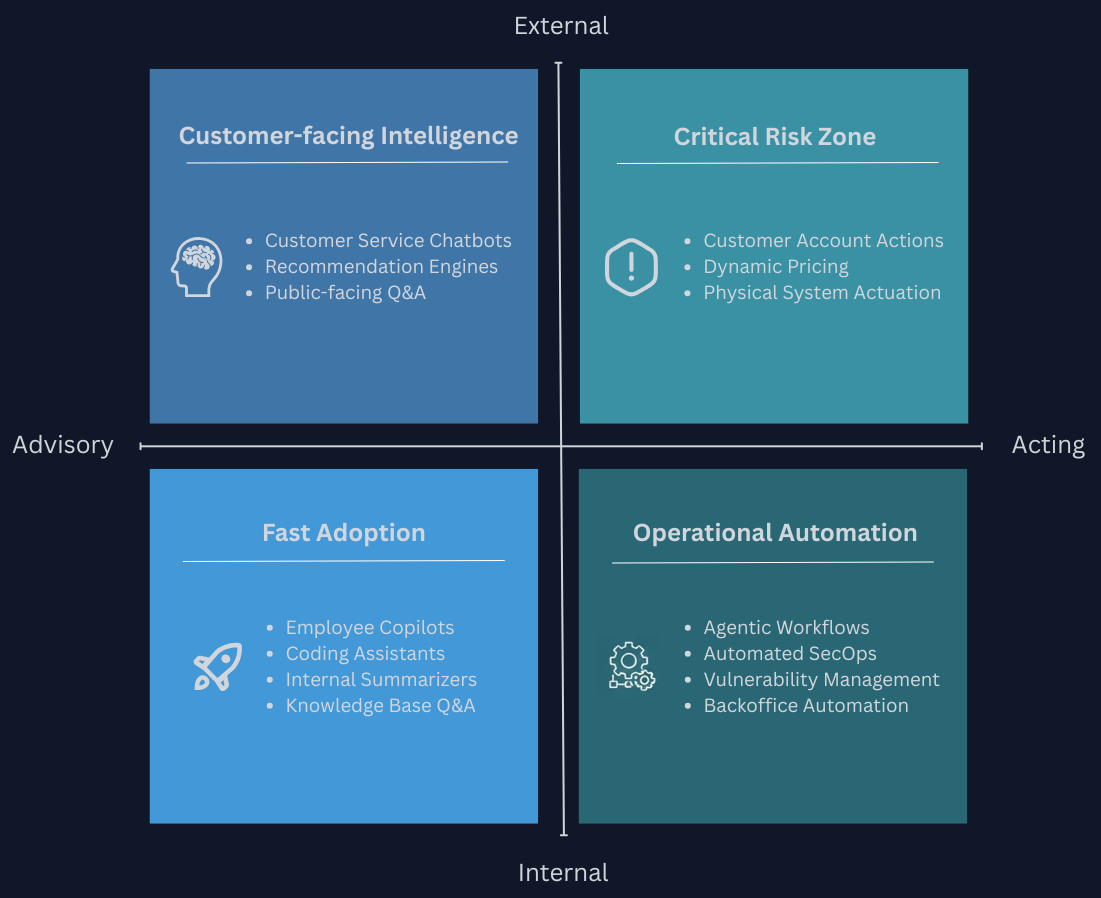

A useful frame for thinking about risk and the governance it requires is a 2x2 across two dimensions:

- Is the AI primarily advisory (producing insights and recommendations) or is it acting (taking consequential action)?

- Is its impact primarily internal or external to the organization?
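The two dimensions can be written down as a small classification function. This is a minimal sketch rather than a definitive implementation. The names `Quadrant` and `classify` are illustrative, and the `output_treated_as_authoritative` flag is an assumption that anticipates the outcome-risk rule discussed under Quadrant 3: external output that recipients may treat as binding is governed as acting.

```python
from enum import Enum


class Quadrant(Enum):
    INTERNAL_ADVISORY = 1  # Quadrant 1: the fast adoption zone
    INTERNAL_ACTING = 2    # Quadrant 2: operational automation
    EXTERNAL_ADVISORY = 3  # Quadrant 3: customer-facing intelligence
    EXTERNAL_ACTING = 4    # Quadrant 4: the critical risk zone


def classify(is_external: bool, takes_action: bool,
             output_treated_as_authoritative: bool = False) -> Quadrant:
    """Map a deployment onto the 2x2.

    output_treated_as_authoritative is a hypothetical flag: set it when
    external recipients may reasonably treat the output itself as binding.
    """
    if is_external:
        # Outcome risk, not system architecture, decides the quadrant.
        if takes_action or output_treated_as_authoritative:
            return Quadrant.EXTERNAL_ACTING
        return Quadrant.EXTERNAL_ADVISORY
    if takes_action:
        return Quadrant.INTERNAL_ACTING
    return Quadrant.INTERNAL_ADVISORY
```

For example, `classify(is_external=True, takes_action=False, output_treated_as_authoritative=True)` returns `Quadrant.EXTERNAL_ACTING`, even though the system executes no action.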

Quadrant 1 - Internal + Advisory: The Fast Adoption Zone

The lowest-risk quadrant. Output goes to an internal user who applies their own judgment before acting. The consequences of a wrong answer are bounded. This is the right starting point for most organizations.

CIO focus: Define an acceptable-use policy before rollout. Employees need to know what data can go into which tools. Set clear expectations around output verification. Govern tool sprawl to avoid a fragmented landscape of shadow AI.

CISO focus: Implement data leakage prevention controls to ensure sensitive internal data is not transmitted inappropriately to external model providers. Conduct third-party vendor validation for any AI tool accessing internal systems. Understand and document data retention and training reuse policies for every provider in the stack.

Quadrant 2 - Internal + Acting: Operational Automation

Once AI moves from recommending to doing, the risk profile shifts substantially. An agent that files, moves, deletes, or modifies things can cause damage at speed and at scale. But the potential upside is proportional. Back-office process automation is frequently one of the highest-value use cases for AI, particularly for organizations without extensive previous experience with AI.

CIO focus: Ensure every agent has a named human owner responsible for its outputs, not just a technical team responsible for its uptime. Define a clear permissions architecture limiting what agents can access and act upon. Maintain sufficient auditability so actions can be traced and explained. Require rollback capability within workflows as a deployment prerequisite.

CISO focus: Apply least-privilege principles to agent permissions as you would to human users. Require human-in-the-loop for destructive or irreversible actions. Ensure production systems are air-gapped from environments where agents operate. Monitor for anomalous agent behavior: an agent accessing data outside its normal scope, for example, is a meaningful signal.
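The Quadrant 2 controls (a named owner, explicit permissions, human approval for irreversible actions, and an audit trail) can be combined in a single authorization check. The sketch below is illustrative only. `AgentPolicy`, `Decision`, the action names, and the default set of irreversible actions are all hypothetical, and a real deployment would enforce this in its identity and workflow systems rather than in application code alone.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN_APPROVAL = "needs_human_approval"
    DENY = "deny"


@dataclass
class AgentPolicy:
    owner: str          # named human accountable for the agent's outputs
    allowed: frozenset  # explicit (action, resource) grants; all else is denied
    # Hypothetical default: actions that always require human sign-off.
    irreversible_actions: frozenset = frozenset({"delete", "overwrite"})
    audit_log: list = field(default_factory=list)

    def authorize(self, action: str, resource: str) -> Decision:
        """Return a decision and record it so actions can be traced later."""
        if (action, resource) not in self.allowed:
            decision = Decision.DENY  # least privilege: no grant, no access
        elif action in self.irreversible_actions:
            decision = Decision.NEEDS_HUMAN_APPROVAL
        else:
            decision = Decision.ALLOW
        self.audit_log.append((action, resource, decision.value))
        return decision


policy = AgentPolicy(
    owner="jane.doe",  # hypothetical owner
    allowed=frozenset({("read", "invoices"), ("delete", "invoices")}),
)
policy.authorize("read", "invoices")    # Decision.ALLOW
policy.authorize("delete", "invoices")  # Decision.NEEDS_HUMAN_APPROVAL
policy.authorize("read", "payroll")     # Decision.DENY
```

Denied requests are logged as well as allowed ones, so an agent repeatedly reaching outside its grants shows up in the audit trail as the kind of anomaly signal described above.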

Quadrant 3 - External + Advisory: Customer-Facing Intelligence

Moving outside the organizational boundary introduces a step change in risk exposure. You no longer control who interacts with the system, what they feed into it, or how they interpret the output.

A note of caution on classification: The line between advisory and acting blurs when AI output reaches external users. A chatbot helping a customer explore product options is advisory. A chatbot communicating policy details, contractual terms, or entitlements is closer to acting - the output itself carries consequence regardless of whether it executes any system action. When assessing which quadrant a customer-facing system belongs in, frame the question around outcome risk, not system architecture.

If the output can create an obligation, change an expectation, or influence a decision the recipient treats as authoritative, apply Quadrant 4 governance. The Air Canada case is instructive: a tribunal held the airline liable for its chatbot's confident but incorrect statement about a refund policy. The system executed no action. The output was the consequential act. For systems that are genuinely advisory - surfacing information to external users who are expected to verify or act on it independently - the risk is still materially higher than in the internal quadrants.

CIO focus: Invest in hallucination containment - retrieval-augmented generation, output validation, and scope limitation so the system cannot confidently answer questions it should not be answering. Define clear escalation paths to human agents for anything outside the system's competence.
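Scope limitation and escalation can be sketched as a routing step that runs before any model is called. Everything named here is an assumption for illustration: `route`, `Route`, and the keyword lists are hypothetical, and plain keyword matching stands in for whatever topic classifier a real system would use. The design point is the default, which sends anything not recognized as in scope to a human.

```python
from dataclasses import dataclass

# Hypothetical keyword lists; a production system would likely use a
# trained classifier rather than substring matching.
IN_SCOPE_TERMS = ("plan", "feature", "compare")
RESTRICTED_TERMS = ("refund", "contract", "entitle", "legal", "policy")


@dataclass(frozen=True)
class Route:
    handler: str  # "assistant" or "human"
    reason: str


def route(message: str) -> Route:
    """Decide who answers: the assistant, or a human agent."""
    text = message.lower()
    # Restricted topics are checked first so they win over in-scope matches.
    if any(term in text for term in RESTRICTED_TERMS):
        return Route("human", "restricted topic")
    if any(term in text for term in IN_SCOPE_TERMS):
        return Route("assistant", "in scope")
    # Fail closed: unknown topics escalate rather than get a confident answer.
    return Route("human", "outside known scope")
```

With these lists, `route("Can you compare the two plans?")` goes to the assistant, while `route("Am I entitled to a refund?")` escalates to a human.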

CISO focus: Build continuous monitoring for anomalous outputs and usage patterns. A customer-facing system that drifts outside its intended scope can accumulate legal exposure quietly, and passive alerting alone is rarely sufficient to catch it early. Implement prompt injection defenses - external users will probe for vulnerabilities, intentionally or not. Conduct regular red teaming. Treat all external inputs as untrusted.

Quadrant 4 - External + Acting: The Critical Risk Zone

The highest-consequence quadrant. AI acting on behalf of the organization in ways that affect external parties - their accounts, their physical safety, their financial standing, or their legal entitlements - crosses into territory where both the technical and legal stakes are at their highest. This includes systems that take direct action: processing transactions, modifying accounts, executing decisions. It also includes systems whose output is the action - communicating binding information, stating entitlements, or making representations that recipients reasonably treat as authoritative. As noted in Quadrant 3, many customer-facing chatbots belong here even if they execute no system action. The gap between organizational readiness and deployment ambition is most dangerous here.

CIO focus: Require formal validation and structured red teaming before deployment. Maintain explicit fail-safes and human-in-the-loop controls for any consequential action. Understand the full liability chain: what happens legally when the AI makes a wrong call? Ensure that question has been answered before deployment.

CISO focus: Map the system's regulatory classification under applicable frameworks (EU AI Act, sector-specific regulation) early. Validate that automated decision-making restrictions are respected. Conduct AI-specific penetration testing: prompt injection, jailbreaking, context manipulation, tool misuse, and output exploitation paths. Build incident response procedures specific to AI misbehavior - they differ from standard IT incident response.

The Common Thread

Each quadrant demands a different governance posture, but the underlying principle is consistent: match the investment to the consequence. The governance overhead appropriate for an autonomous customer-facing system would be disproportionate for an internal knowledge assistant, and the overhead appropriate for that assistant would be dangerously inadequate for the customer-facing system. Where organizations go wrong is in applying a uniform governance template across all AI deployments, even though those deployments have fundamentally different risk surfaces.

This framework also has a temporal dimension. Most organizations do not begin in Quadrant 4. They start where the risk is bounded, build operational confidence, and expand from there. Governance capability, like AI capability itself, develops incrementally, and the quadrant map provides a way to reason about what each next step actually requires before committing to it.

Next in this series

Knowing which quadrant your deployment falls into tells you what governance focus is appropriate. But governance alone does not answer the investment question: where should the budget actually go, and in what order?

In Part III, we translate the governance framework into an investment sequence - where to start, which business areas deliver the most measurable returns, and what realistic timelines look like.